Netflix launched its rental service in April 1998. I was running PowerSurge at the time, deploying dedicated servers and trying to make DNS manageable for people who should not have to love raw BIND configs. We were on the same internet, building entirely different things. They were betting the network was good enough to manage the logistics of physical media. I was betting the network was good enough to run a hosting business. We were both right, and both bets worked for a while.

Neither of us was betting the network was good enough to deliver video. Because in April 1998, it was not.

Part of a series on what the DotCom era can teach us about AI.

The series so far has argued two things that matter for this post. One, that the DotCom bubble destroyed enormous investor capital whilst financing infrastructure. Transport, hosting, payments, search. Everything built afterwards depended on that foundation. Two, that the rewiring of retail took two decades and required search, logistics, trust, and generational habit change to align before Amazon’s shape was obvious. Streaming is the next case in that pattern, and the cleanest historical parallel to what current AI is trying to become. It was a substrate transition that ran on top of bubble-era infrastructure, and it took a full decade longer than anyone at the time expected.

None of this was free. The fibre that eventually carried Netflix into living-room TVs was laid during the DotCom overbuild, which I watched from the inside. Telecoms and backbone operators raised enormous capital in 1999 and 2000 against revenue forecasts that would not materialise for another decade. Many went bankrupt, and left behind transport capacity that later buyers acquired at cents on the dollar.

But dark fibre alone was not what made streaming viable. What multiplied the value of that buried glass was dense wavelength-division multiplexing (DWDM), the optical-layer engineering that raised the carrying capacity of each existing strand by orders of magnitude. DWDM did not arrive on a smooth curve. It arrived in step changes, and the step changes were larger than the roadmaps had implied. The bubble paid for the pipes. A decade of unglamorous engineering on top of those pipes was what actually moved the goalposts.
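The multiplication is simple arithmetic: a strand's capacity is the number of wavelengths it carries times the rate of each wavelength. A minimal sketch, where the channel counts and per-channel rates are illustrative assumptions rather than figures from any particular carrier or year:

```python
def strand_capacity_gbps(wavelengths: int, gbps_per_wavelength: float) -> float:
    """Aggregate capacity of one fibre strand: wavelength count times per-wavelength rate."""
    return wavelengths * gbps_per_wavelength


# Illustrative assumptions, not historical figures for any specific network.
single_channel = strand_capacity_gbps(1, 2.5)  # one wavelength at 2.5 Gb/s
dwdm = strand_capacity_gbps(80, 10.0)          # 80 DWDM wavelengths at 10 Gb/s

print(f"{single_channel} Gb/s -> {dwdm} Gb/s, a {dwdm / single_channel:.0f}x increase on the same glass")
```

The specific numbers matter less than where the multiplier lives: in the optical equipment lit on the existing route, not in the glass itself. That is why capacity could jump in steps without a single new strand being laid.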

The AI buildout is showing the same shape. Capacity matters. Efficiency on top of the capacity matters more, and it tends to arrive in jumps. DeepSeek demonstrated that frontier-quality output could be produced at a fraction of the assumed compute cost. Nvidia’s Blackwell generation delivered gains over its predecessor that were larger than the linear roadmaps had suggested. Neither was a smooth tick on a curve. Both were the kind of efficiency leap that resets what the same underlying capacity can deliver. The pipes for AI are being laid right now. The DWDM equivalents have not all arrived yet, and we should not assume we have seen the biggest of them.

Streaming is a uniquely bandwidth-hungry application, which is why the pipes mattered. Broadcast television sends one signal to many viewers. Streaming sends an individual copy to every subscriber, in real time, at whatever bitrate their connection can sustain. That one-to-one delivery model is brutally expensive in transport terms, and it arrived just as U.S. ISPs were introducing home bandwidth caps to push back against traffic they had not planned for. The backbone capacity inherited from the bubble was what made the economics even tentatively viable. Without it, streaming would have been choked in its first serious year.
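The difference in transport cost between the two delivery models fits in a few lines. The audience size and per-stream bitrate below are illustrative assumptions; the point is the shape of the curve, flat for broadcast and linear in the audience for one-to-one streams:

```python
def broadcast_load_mbps(_viewers: int, bitrate_mbps: float) -> float:
    # One signal serves every viewer, so load does not grow with the audience.
    return bitrate_mbps


def unicast_load_mbps(viewers: int, bitrate_mbps: float) -> float:
    # Each viewer receives an individual copy, so load grows linearly with the audience.
    return viewers * bitrate_mbps


viewers = 1_000_000  # assumed concurrent evening audience
bitrate = 3.0        # assumed per-stream bitrate in Mb/s

print(f"broadcast: {broadcast_load_mbps(viewers, bitrate):.1f} Mb/s")
print(f"unicast:   {unicast_load_mbps(viewers, bitrate) / 1_000_000:.1f} Tb/s")
```

Under these assumptions the same evening audience costs a million times more transport when every viewer gets a personal copy, which is why the inherited backbone capacity mattered so much.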

That is the pattern this series keeps coming back to, and it is the pattern I expect to repeat with the current AI buildout. The investors who fund the capacity may not be the investors who see the returns, but the capacity becomes real, and everything that comes after depends on it being there.

The gap between the logistics the internet could manage in 1998 and the video it could not yet deliver is the whole story of how streaming happened. Most accounts skip it. They run a straight line from “internet existed” to “Netflix killed Blockbuster” and miss the fifteen years of conditions that had to close in between. Those conditions, and the order in which they closed, are worth understanding carefully. Not for nostalgia. Because the same sequence is playing out with AI, and most of the coverage is making the same mistake of drawing the line too straight.

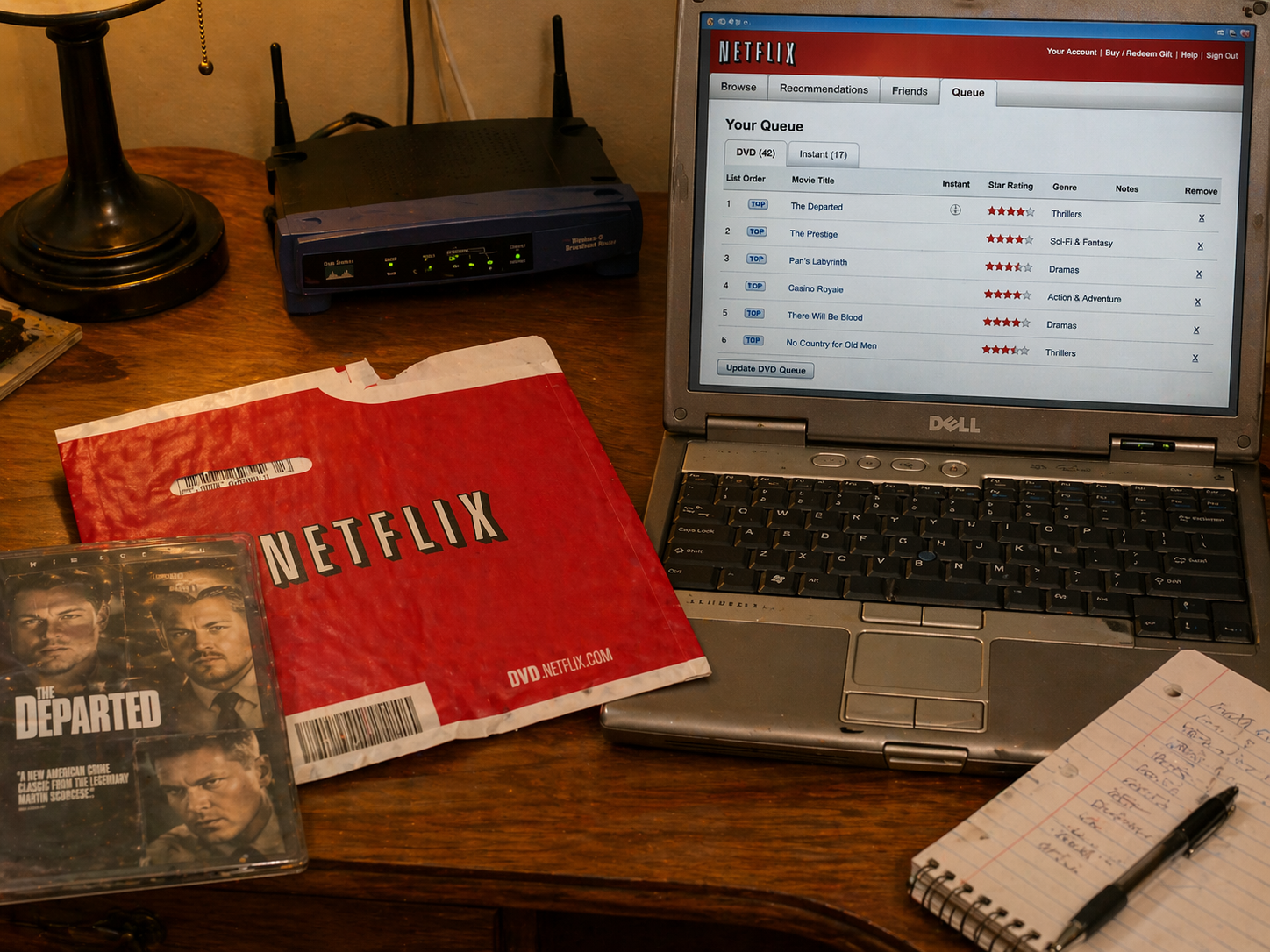

The Red Envelope Was the Prototype

Netflix spent its first nine years as a postal service.

By April 2007 it had 6.8 million subscribers, and that business was almost entirely physical. A large catalogue, a queue system, no late fees, and a fulfilment network that could reach most U.S. subscribers with one-business-day disc delivery. What the internet had contributed to that model was not video delivery. It had made the logistics of physical media dramatically better than a rental store ever could. You browsed online, managed a queue, received mail, returned mail. The discovery and fulfilment were digital. The content moved on polycarbonate.

I had customers in that period running small-scale media services on dedicated servers. People trying to stream audio, experiment with video, build early download stores. Most of them ran out of bandwidth budget before they ran out of ideas. Transit was expensive, peering took months to arrange, and home internet caps in the U.S. were only starting to appear as ISPs tried to slow the growth of sustained traffic that was outpacing their assumptions. I could see the Seattle skyline from my bedroom window, and I was still rural enough that when cable internet finally arrived, the upstream channel ran over a dial-up modem. Connectivity was not guaranteed, and even when you had it the experience varied wildly. The disc, in that environment, was not a compromise. It was the only delivery mechanism that did not require you to renegotiate peering every month or ask your subscribers to watch their monthly cap.

Streaming launched quietly inside that business in January 2007, as a bonus feature. Netflix’s own 2009 filings still described the DVD-and-streaming bundle as the core product, whilst roughly 2 million discs shipped daily. By the end of 2009, 48 percent of subscribers had watched at least fifteen minutes of streaming content, real adoption running parallel to a still-larger physical business. The service ran in that mode for years, because streaming was real but not ready to carry the full weight.

That sequencing is undervalued in most retrospective accounts. The internet did not replace physical video in one move. It first made physical video radically more convenient, built a subscriber base and a recommendation engine on that convenience, and only later became reliable enough to replace the disc itself. The red envelope was not a primitive version of something that streaming improved. It was a different product that solved a different problem, and its success built the base from which streaming grew.

AI is in the red envelope era right now.

Current AI tools are genuinely useful. They solve real friction. For a meaningful range of tasks, they are clearly better than what preceded them. But the chat interface, the browser tab you switch to, the API you call separately from everything else: those are the queue management and postal fulfilment of the AI story. The underlying capability is real. The delivery mechanism is provisional.

The internet managed disc logistics in 2007 not because that was the ideal answer, but because it was the best answer available given the infrastructure. Current AI interfaces are roughly the same: the best available answer given current infrastructure, and almost certainly not the final form.

What Had to Become True

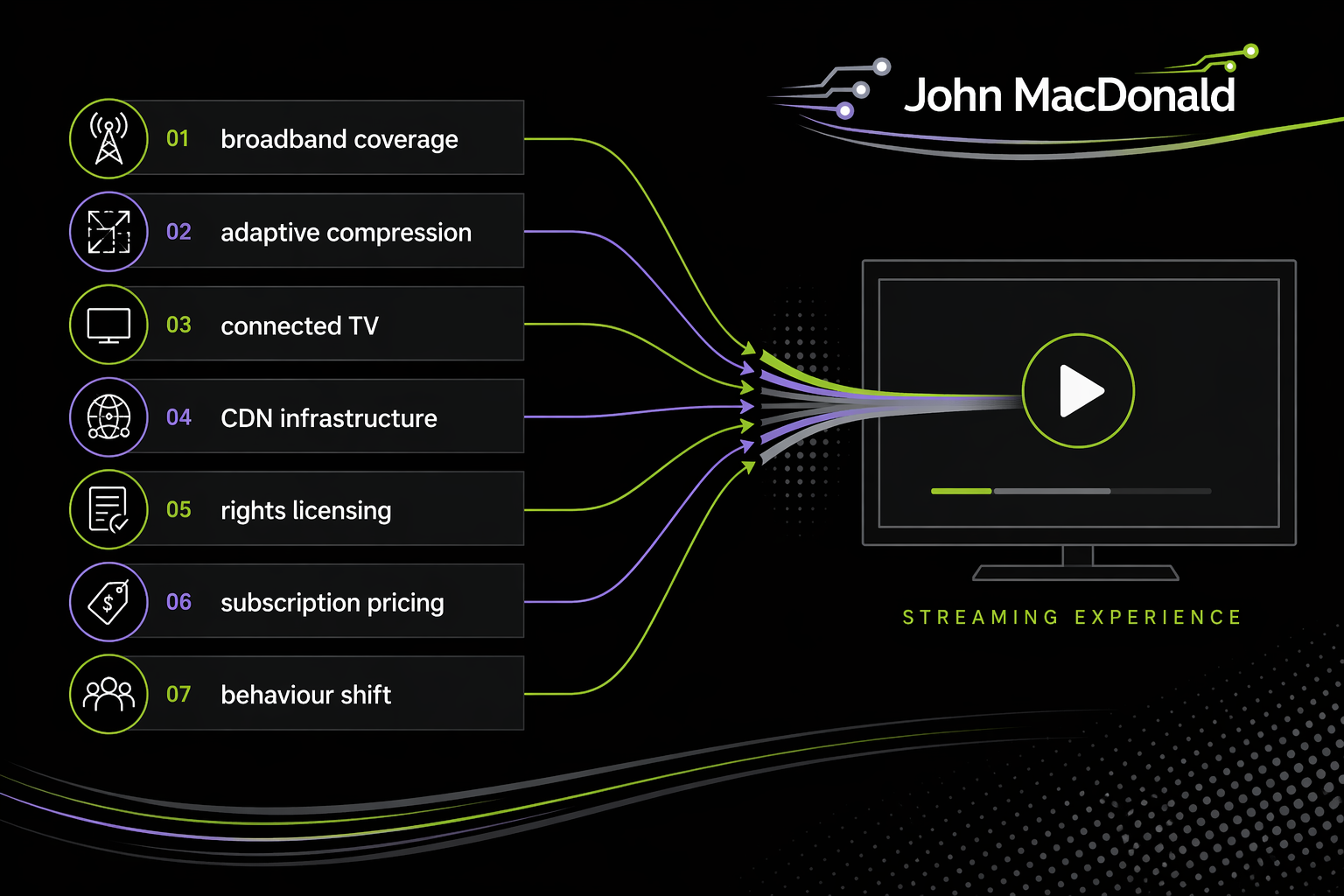

Broadband is the answer most people reach for when asked why streaming took until around 2010 to become the dominant delivery model. Broadband is not wrong. It is incomplete to the point of being misleading.

U.S. home broadband adoption rose from 47 percent of adults in early 2007 to 66 percent in 2010 and 70 percent in 2013. Those numbers mattered. But by then, raw subscription figures were not the binding constraint. What mattered was whether the service was reliably good enough, on real consumer connections at real evening peak times, to sustain a watching session rather than an experiment.

Several things had to become true at roughly the same time.

Compression and adaptive delivery had to improve. H.264 encoding materially raised the quality ceiling at a given bitrate. HTTP-based adaptive streaming made video resilient on fluctuating consumer connections, adjusting resolution dynamically rather than stopping to buffer. These were not consumer-visible features. They were infrastructure changes that made the consumer experience possible.
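The core idea of adaptive delivery can be sketched as a rate-selection rule: the video is encoded at several bitrates, and for each segment the player picks the highest one that fits comfortably under the throughput it has recently measured. The ladder values and the 0.8 safety margin below are assumptions for illustration, and this throughput-only rule is a simplification; real players also weigh how much video is already buffered.

```python
# Hypothetical bitrate ladder in kb/s, lowest to highest rendition.
LADDER_KBPS = [235, 750, 1750, 3000, 5800]


def choose_rendition(measured_kbps: float, safety: float = 0.8) -> int:
    """Return the highest rendition that fits under a safety margin of measured throughput.

    If nothing fits, fall back to the lowest rendition: a blurrier picture
    instead of stopping to buffer.
    """
    budget = measured_kbps * safety
    fitting = [rate for rate in LADDER_KBPS if rate <= budget]
    return max(fitting) if fitting else LADDER_KBPS[0]


# Simulated throughput for five consecutive segments on a fluctuating evening connection.
throughput_kbps = [6000, 2500, 900, 300, 4000]
print([choose_rendition(t) for t in throughput_kbps])  # [3000, 1750, 235, 235, 3000]
```

As measured throughput falls, the picture steps down a rendition at a time instead of stalling, and it climbs back as soon as the connection recovers. That graceful degradation is what made streaming tolerable on ordinary consumer lines.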

The television had to become internet-connected. This is the step most accounts treat as incidental. It was the hinge. Connected-TV penetration in U.S. households was 24 percent in 2010, 38 percent in 2012, and 49 percent in 2014. By 2014, nearly 80 percent of Netflix streaming was happening on a TV set. Before that threshold, streaming was something you did at a desk. After it, streaming was what you did on the couch. The behaviour did not change because the technology got better in the abstract. It changed because the technology reached the right room.

Chromecast shipped in July 2013. Amazon’s Fire TV followed in April 2014. Those devices did not invent connected-TV capability. Smart TVs were already on the curve. But they collapsed the price and the setup friction. For thirty-five dollars you could turn any reasonably recent television into a streaming endpoint without buying a new set. The connected-TV hinge did not close when the first smart TVs shipped. It closed when the cheapest path from an ordinary television to a streaming service dropped below the cost of a single month of cable.

The AI equivalent is worth watching for. The device, browser extension, or editing surface that collapses the friction of getting capable AI into the tool where someone already works has not arrived yet. When it does, the usage curve will not be subtle.

The delivery infrastructure had to scale to the actual demand. By spring 2011, Netflix represented roughly 30 percent of North American peak downstream traffic, and by autumn that figure had risen to 32.7 percent. You cannot serve that share of peak traffic from a central cloud without very deliberate decisions about where the bits physically live. Netflix launched its Open Connect programme in October 2012, placing servers directly inside ISPs and internet exchange points. The network had to be re-engineered around the demand, not just the capacity.
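Why placing servers inside ISPs changes the economics comes down to one ratio: every request an in-network cache answers locally is traffic that never crosses the ISP's upstream links. A minimal sketch, where the demand figure and hit ratios are illustrative assumptions, not Open Connect measurements:

```python
def upstream_load_gbps(peak_demand_gbps: float, cache_hit_ratio: float) -> float:
    """Peak traffic that still crosses the ISP's upstream links after local cache hits."""
    if not 0.0 <= cache_hit_ratio <= 1.0:
        raise ValueError("cache_hit_ratio must be between 0 and 1")
    return peak_demand_gbps * (1.0 - cache_hit_ratio)


demand = 1000.0  # assumed evening peak demand inside one ISP, in Gb/s
for hit_ratio in (0.0, 0.5, 0.9):
    print(f"hit ratio {hit_ratio:.0%}: {upstream_load_gbps(demand, hit_ratio):.0f} Gb/s upstream")
```

Real deployments involve far more than a single ratio; the sketch only shows why the share of traffic served at the edge, rather than raw backbone capacity, became the number that mattered.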

Rights licensing had to evolve, or rather, be forced to evolve. Netflix’s 2008 annual report included a direct warning that streaming content was not protected by the first-sale doctrine and depended entirely on revocable licences. A DVD could be purchased, owned, and lent. A stream could be recalled overnight. When the Starz deal expired in early 2012, a meaningful chunk of the catalogue disappeared at once, which showed what that leverage meant in practice. The streaming catalogue was structurally less stable than the disc library, and everyone inside the business knew it.

Then there was the psychology of the pricing. The $7.99 streaming-only plan launched in November 2010 reframed the question. Not: DVDs with a bonus feature. Instead: unlimited instant access for the price of a modest monthly utility. By January 2011, more than a third of new Netflix subscribers were choosing the streaming-only option. The framing change mattered as much as the price point.

Now run the same checklist against AI.

Reliable inference at reasonable cost is the broadband equivalent. At the commodity layer, costs are falling. At the frontier, they are moving the other way. In April 2026, GitHub Copilot shifted from vague per-request quotas to actual token metering, with some users reporting their effective usage cut by as much as 30x at the same price. OpenAI began routing new functionality almost exclusively through Codex, which runs on a metered token quota rather than the unmetered ChatGPT subscription. Anthropic introduced peak-window rate limits on Claude while Opus 4.7 consumes more tokens per task than its predecessors. DeepSeek is the notable exception, still pushing prices down with the R4 release. None of this looks like a settled market. It looks like operators trying to figure out unit economics in real time, with cash burning at a rate that would feel familiar to anyone who lived through 2000.

The 2007 broadband parallel is sharper than it might look. Access existed. It was the metering, the caps, and the overage pricing that made sustained use expensive enough that ordinary users felt every minute of it.

Workflow integration is the connected TV. AI is still largely a separate thing you go to. The behavioural shift will come when it is embedded in the tools where work actually happens: the IDE (where the integration is furthest along), the document editor, the CRM, the hospital records system. Not a new tab to open. The same environment where the work already lives. Before that integration, AI is something you use at the desk. After it, AI is something you use without noticing the switch.

Resilience under real conditions is the adaptive streaming equivalent. AI tools that perform well on benchmarks and degrade badly at the edges of real tasks are still in the era before adaptive streaming, when a weak connection stopped the picture instead of softening it. The performance on controlled demonstrations is impressive. The performance when a real user pushes a real workflow to its edges is the part of the story the benchmarks are not telling.

Regulatory and trust frameworks are the rights licensing. There is no settled model for IP in training data, liability for AI-generated output, or sector-specific constraints in medicine and law. These are not administrative delays. They are structural conditions that will determine what the AI catalogue can contain and how stable it will be.

Subscription psychology for AI has not fully arrived. Most people still experience AI tools as something they pay for separately, access separately, and evaluate separately from everything else they do. The interface that makes AI feel like a utility rather than a product, present by default instead of fetched on request, has not reached mainstream deployment.

Tracking broadband adoption was the wrong single variable for predicting when streaming would win. Tracking model quality is probably the wrong single variable for predicting when AI becomes embedded behaviour. The checklist matters more than any single item on it.

Blockbuster Tried

Blockbuster had 4,855 U.S. stores as of January 2008. It also had a by-mail service, digital downloading partnerships, on-demand deals with device manufacturers, and a programme that let mail subscribers exchange discs at physical locations. It was not doing nothing.

It was doing things from the wrong cost base.

The store network that had been a competitive moat became a fixed liability when the traffic moved online. The debt load, over $900 million at the time of its September 2010 bankruptcy filing, was carried by a business that needed sustained store-level revenue to service it. Netflix, by contrast, was building fulfilment infrastructure for physical media whilst also investing in streaming delivery, without a legacy estate consuming the margin.

Blockbuster did not miss the direction. Its own filings from that period describe the by-mail service, digital downloads, on-demand partnerships, and in-store disc exchange running in parallel. The problem was not seeing where things were heading. The problem was reacting from a cost base built for a different era.

That failure pattern recurs reliably. The incumbent is not usually blind. It is usually trapped. Trapped by infrastructure that was a moat yesterday and is overhead today. Trapped by a customer base that still wants the old thing, until suddenly it does not. Trapped by the financial commitments made to sustain the old model whilst trying to fund the new one.

The parallel for AI is not abstract. A professional services firm whose billing model depends on junior staff hours, a consulting practice built on research that capable AI can now do in minutes, a law firm whose leverage ratio requires first-year associates doing document review: these are organisations approaching AI from something close to Blockbuster’s position. Not because they are ignorant of the direction. Because their cost structure and financial commitments constrain how fast they can actually move, regardless of what they put on slides.

The question is not whether those organisations see what is coming. It is whether their overhead can absorb the transition before the transition decides for them.

From Schedule to Control

The consumer behaviour shift that made streaming irreversible was not primarily about convenience. It was about a different relationship with time.

Store rental had a return window. DVD-by-mail had a queue cycle measured in days. Early streaming still carried a mental frame of watching as something you planned. What changed between roughly 2010 and 2013 was that watching something on your own schedule, pausing across evenings, starting the next episode immediately because it was already there, stopped feeling like an indulgence and started feeling normal.

By 2013, survey data found 88 percent of Netflix users reporting they had watched three or more episodes of the same show in a single day. Netflix’s decision to release all thirteen episodes of House of Cards at once in February 2013 was not only a marketing choice. It was an argument that control of schedule was itself a product feature. The recommendation algorithm stopped being a discovery tool and started being a relationship.

The shift from schedule to control is the behavioural precedent most relevant to AI.

Most AI usage today is transactional and deliberate. You identify a need, switch to an AI tool, make a request, take the output back to whatever you were doing. That is roughly equivalent to using a DVD rental store in 1999: functional, somewhat better than the alternative, but requiring a mode switch and a conscious decision each time.

The embedded version is different. When AI is present in the tool you are already using, when it can see the context of the work you are doing without being explicitly fed it, when its suggestions appear without a trip to a separate interface, the relationship shifts from tool to environment. That is the connected TV moment. That is the shift from schedule to control. Parts of software development are approaching that threshold now. Most professional domains are not there yet.

We do not know when the rest of the checklist closes. But the AI tools building daily active usage now, in the current transactional era, are building the subscriber base that the next form will inherit. Netflix’s DVD users were not a legacy problem that streaming had to overcome. They were the base from which streaming adoption grew. The distinction matters for thinking about which current AI products are building something compounding.

The Originals Problem

Streaming had a structural fragility that the DVD business did not.

A disc could be purchased under the first-sale doctrine, owned, and monetised across many rentals without further payment to the studio. A stream required a licence, and that licence could be withdrawn. When the Starz deal expired in early 2012, the content disappeared from Netflix’s catalogue overnight. What that illustrated was that a streaming service dependent entirely on third-party rights was always one negotiation away from a depleted library.

Original programming was not primarily a prestige move. It was a response to structural vulnerability. Content Netflix created was content Netflix owned. The subscriber relationships, the recommendation engine, the viewing habits built on that content: all of it was more defensible when the underlying material could not be recalled by a licensor. By 2013 Netflix was using originals as a direct test of a licensing model the company could actually control.

I came out of media recently enough to have watched the next chapter up close. By the mid-2010s every serious streaming player had concluded that licensed catalogue was a bridge, not a destination. Netflix built Netflix Studios and by the early 2020s was producing more original content annually than most traditional studios. Apple launched TV+ in November 2019 with originals from day one, skipping the licensed-catalogue phase entirely. Amazon closed its acquisition of MGM in March 2022 for $8.5 billion, folding a nearly hundred-year-old studio and the Bond franchise into Prime Video. The platforms had stopped being tenants in someone else’s catalogue and started being the studios themselves.

I was at Disney when Disney+ launched in November 2019. What the outside saw was a service going from zero to tens of millions of subscribers faster than any launch in the history of media. What the inside saw was every content team, every production pipeline, every post house, every localisation team absorbing the weight of a subscription product that demanded continuous new material to justify its price. The brief was content, content, content: dramatically more hours, produced at meaningfully lower cost per hour than the legacy theatrical model had assumed. That collided with how the company had always done things, particularly on the Marvel side, where “this is the way WE do it” met cost structures the new product could not absorb. Then the pandemic hit four months later and compressed the timeline further. Teams scaled, reorganised, scaled again. Content commitments made against bullish forecasts had to be fulfilled regardless of whether the economics were settling. It was a period of real work and real strain, and it is worth naming because the scale-up story in a substrate transition is not free. Somebody pays for it in sustained pressure, long before the market decides whether the bet worked.

The incumbents did not miss streaming. They responded. Disney+ in 2019, HBO Max in 2020, Paramount+ in 2021. Disney+ took years to reach profitability. Most of the others are still working through it, trying to find the right scale to compete with platforms that had a decade of streaming-native learning and vastly more capital to absorb the content spend. What the streaming-natives knew that the studios had to learn expensively was a stack of things: interface design, ease of use, recommendation systems, and the unit economics of producing content at streaming volume rather than at theatrical-release volume.

The next chapter is consolidation. Skydance has acquired Paramount, and further acquisition activity continues to reshape who owns what across streaming, studio, and linear. Warner Bros. Discovery, itself a product of recent consolidation across studios, cable, and streaming, is part of that ongoing story. How aggressively these new ownership configurations bind streaming to studio to linear will be one of the more revealing experiments of the next two years. The studios themselves are the second-order question: whether the platforms-as-studios pattern absorbs another major incumbent, or whether the studios-as-platforms pattern starts pushing back.

That is a different shape of disruption than the original Blockbuster story. The incumbents were not blindsided. They were forced into a game whose economics had been written by their former tenants.

The AI parallel is not theoretical, and the vertical-integration pattern is already running fast. Anthropic, OpenAI, and their peers are increasingly not just providing capability but building the products on top of it. The same pattern shows up more broadly. SpaceX and xAI are vertically integrating hardware, infrastructure, and application layers under common ownership. The AI acquisition market in 2025 and 2026 has seen deals where the headline number looked impossibly high for the underlying IP, because the real purchase was the team. The announced $60 billion Cursor acquisition, if it actually closes, is primarily a talent buyout dressed as an IP transaction. These are not ordinary talent acquisitions routed through recruiting. They are companies paying studio-acquisition prices to secure the people who can build the next layer up.

Organisations currently renting AI capability from foundation-model providers are in the Starz-era streaming-service position. Real capability, on terms someone else controls. The model providers, meanwhile, are doing what Netflix did. Moving upstream into the product layer. The question for every organisation in the middle is whether they are building something of their own that the studio above them cannot simply build or buy. Strategic depth, in this era, means owning something. Data, workflow, relationship, brand. Anything that is not a rewrapped call to somebody else’s API.

The Buffering Era

In 2007 and 2008, streaming worked badly often enough that using it for anything important felt unwise. The picture would drop to a low resolution. The spinner would appear mid-scene. Some evenings it simply did not work. People used it anyway, because imperfect streaming was still genuinely useful for a lot of content. They kept the DVD subscription alongside it, because the disc still won on reliability for anything that mattered.

This is AI in 2026, rendered almost exactly.

The hallucinations are frequent enough to be normal. Context limitations cause real problems at the edges of real tasks. The model confidently produces something wrong often enough that handing output to anyone without checking carries real risk. People use it anyway, because it is genuinely useful for a wide range of work. They keep the human process running alongside it, because for anything that matters, the human still wins on reliability.

The buffering era ends. It always does. The question it leaves behind is not about the technology. The question is which applications become embedded habits before the buffering ends, and which are still waiting for a reliability threshold they have not yet hit.

Netflix’s streaming usage habits were built during the unreliable years on a base of DVD subscribers. The users who had learned to navigate the service, trained the recommendation algorithm with their viewing history, and figured out which content worked and which did not: those users had a compounding advantage when quality improved. They did not start over when streaming got good. They continued.

The organisations and practitioners building systematic workflows around current AI tools, figuring out which tasks reliably deliver value, encoding what works and what does not into repeatable processes: those are the people building on the right side of the buffering era. Not because the current tools are definitive. Because the process knowledge and workflow design built now carry forward.

How to Ride Out a Substrate Transition

People ask me what to actually do about AI, in a career or a business, right now. The streaming transition is the cleanest historical parallel available, and the answer I keep coming back to is shaped by it.

You are watching a substrate change. The preconditions are closing unevenly. Some faster than most people expect, some stuck. The capital going into the build is historically large, some of it will be lost, and the people who did best through the DotCom and streaming transitions were rarely the ones who bet biggest. They were the ones who stayed in the game long enough to compound.

A short list, in the order I would use it.

Watch the checklist, not the hype cycle. The question that actually matters for your category is which preconditions are still missing for embedded AI behaviour, and when they might close. For software development, most of the checklist has already closed. For medicine, law, and education, the reliability and regulatory items are still open and the timeline is genuinely uncertain. Tracking a single headline metric like model quality, adoption percentage, or enterprise spend will mislead you. Tracking the specific conditions that have to close in your context will not.

Carry the legacy that is still paying. This is the pattern operators in a substrate transition most consistently get wrong, and it runs in both directions. The people who dump the old business too early leave money on the table. The people who hold it too long ride it into the ground. DVD-by-mail generated meaningful profit for Netflix for years after streaming became the narrative. Cable continues to generate cash for the studios deep into the streaming era. Plenty of DotCom-era businesses still generate real profit today on infrastructure that was written off in 2001. The same will be true of a lot of today’s pre-AI workflows and the public companies built on them. The practical question is not whether the legacy business is dying. It is when and how you exit, spin off, divest, or scale down, while still capturing the cash it continues to produce. Stock portfolios work the same way. Sector leaders in today’s economy will continue to compound for years. The trick is knowing which of them are legacy cash generators and which are legacy cost centres, and not confusing the two.

Do not commit to the Blockbuster position. If your cost structure depends on the old substrate continuing, and the preconditions for the new one are closing, you have less time than you think. This is not a reason to panic. It is a reason to reduce fixed commitments that will become overhead once behaviour shifts. The organisations that did worst through the streaming transition were not the ones that saw it coming slowly. They were the ones that saw it coming and still could not free up the capital to respond.

Build the harness, not the hero bet. The durable value in the streaming era accrued to the companies that built defensible layers around their content. The recommendation engine, the delivery network, the subscriber relationship, the originals model. The same applies now. Proprietary data, domain-specific workflows, and the institutional learning encoded into tooling are where compounding happens. Betting on which foundation model wins is the wrong-shaped risk. Building the harness that works with whichever model wins is the right-shaped one.

Protect optionality. In 2007 the right bet was not to abandon DVDs for streaming. It was to run both, build subscribers on the one that paid now, and be ready to shift weight when the conditions closed. That required carrying some cost you did not need yet, which felt expensive until it turned out to be the only thing that kept you in the game. The current AI equivalent is keeping your human processes strong while building AI-augmented ones alongside them. Not one at the expense of the other.

Build through the rocky part. Every substrate transition has an interval where the direction is obvious, the reliability is not there yet, and the returns on current products look poor. The people who came through the DotCom era with businesses intact, and the people who came through mobile positioned for the next decade, kept building through that interval. They did not swing for the fences. They compounded.

If I had to compress everything in this series into one line, it would be the last of those. Substrate transitions reward durability over heroics. Stay in the game. Watch the preconditions. Move when they close. Do not shoot for the moon. Shoot for being the operator still standing when the next version of normal arrives.

The Next Interface Is Not This One

The streaming story’s clearest contribution to thinking about AI is this: the first winning interface is rarely the form a technology takes when it actually reshapes behaviour.

DVD-by-mail was not a primitive version of streaming. It was a genuinely distinct product that solved a genuinely distinct problem. Its success built the infrastructure, the subscriber base, the recommendation capability, and the consumer relationship that streaming later inherited. When streaming matured, it was not starting fresh.

The chat interface for AI may follow the same path. Not a degraded form of what comes next, but a distinct product that is building real familiarity with AI assistance, establishing which tasks AI handles reliably, and creating the institutional momentum the next form will inherit. When ambient, workflow-integrated AI matures, it will not start fresh either.

What that next form looks like is genuinely uncertain. Judging by what is already happening at the edges of developer tooling and enterprise software integration, the most plausible direction looks more like the connected TV than the current chat window: present in the environment where work already happens, requiring no mode switch to access, able to see context without being explicitly given it. That is the couch equivalent. That is where the behaviour becomes ambient rather than deliberate.

That shift, when it comes, will feel sudden to anyone not watching the checklist close. It will feel like an obvious continuation to anyone who was.

Organisations that dismissed streaming in 2008 on the grounds that it was genuinely not yet reliable for most of what their customers wanted were making an accurate near-term assessment. They were wrong about the pace at which the checklist would close and wrong about what their cost structure could absorb once it did.

The internet did not change how people watch things when streaming launched. It changed how people watch things when the stream reliably reached the couch. The question worth asking now is what AI’s couch is, and how far away it actually is.

Further Reading

- Netflix 2008 Annual Report (SEC EDGAR). Primary source on the first-sale doctrine problem and why DVD and streaming remained complementary for longer than the retrospective accounts suggest.

- Netflix 2010 Form 10-K. The clearest primary evidence of the viewing crossover, when a majority of subscribers were watching more through streaming than DVD.

- Netflix October 24, 2011 Shareholder Letter. Essential for understanding the Qwikster pricing and brand shock, hybrid economics, and why usage growth and managerial calm did not move together.

- Pew Research Center, Home Broadband Adoption Reports (2007, 2010, 2013). The most reliable independent baseline on household connectivity readiness through the transition.

- Sandvine Global Internet Phenomena Report, Spring and Fall 2011. The clearest evidence that streaming had become a defining feature of how the internet itself was being used, with Netflix at 29.7 percent of North American peak downstream traffic in spring and 32.7 percent by fall.

- Leichtman Research Group, Connected TV Report (2014). The best compact source on the connected-TV penetration timeline that tracks the behavioural hinge more accurately than raw broadband figures.

Postscript

After this went live, Joe Lazar pushed back on LinkedIn, arguing that the streaming end state was fragmentation, not a single Netflix win. Households now run five to seven subscriptions whose combined cost approaches cable, and AI is already fragmenting the same way. I currently run Claude, ChatGPT, and Copilot subscriptions concurrently, plus API access to several other models. He is right that the outcome reorganised cost without consolidating it. I still call Netflix the out-and-out winner. They built the substrate, defined the model, and forced every traditional studio onto streaming-native economics. Disney+ has reached profitability. The rest are mostly also-rans now being absorbed by the consolidation discussed earlier. Either way, the companies that failed to adapt died. Substrate transitions reshape who pays for what. They do not always reduce the bill.